92% of security leaders say something is actively reducing their trust in AI within the SOC. These aren’t skeptics; they’re people who have already adopted AI and believe in its ability to enhance security operations. We know from the 2026 AI SOC Leadership Report that AI is already widely adopted in the SOC, with 94% of organizations using it in some capacity.

And yet, there’s still an AI security and trust gap in the SOC. Why?

Confidence Isn’t the Issue. Deployment Is.

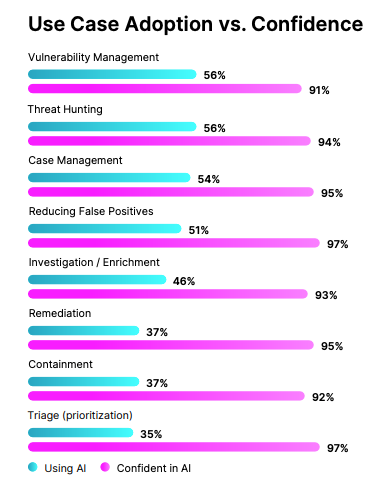

Digging into the data from Torq’s AI SOC Leadership Report, one gap stood out as the most shocking. Across every SOC use case we measured, confidence in AI’s ability to get the job done is nearly universal, ranging from 91% to 97%. CISOs and security leaders aren’t sitting around debating whether AI can handle the work; they know it can. But actual adoption tells a different story.

Vulnerability management and threat hunting lead AI adoption at 56% each, followed by case management, reducing false positives, investigation, and remediation. Surprisingly, triage is the least deployed AI use case, at only 37% adoption, even though it is arguably the most obvious fit for AI. SOC teams are overwhelmed by massive volumes of false-positive–riddled alerts, making triage one of the most repetitive and time-consuming tasks analysts face.

If the use case best suited for AI in the SOC is the one organizations have been slowest to adopt, what does that say?

When we dove deeper into each use case, the responses helped pinpoint exactly what challenges SOC teams were experiencing that led to the adoption vs. confidence gap. The top response for triage was the need for too much human review (34%); for investigation, manual enrichment (32%) and unreliable conclusions (31%) were neck and neck; and for response, the most common answer was lack of trust (33%).

It’s not a capability problem. It’s a lack of trust in the products themselves.

What’s Actually Reducing Trust in AI?

When we asked 450 CISOs and security leaders this question, the answers weren’t what you might expect (or maybe they were, given how universal they were). Nobody led with “I’m worried that AI will take my analysts’ jobs” or “I’m not comfortable with the idea of autonomous remediation.” Those are the answers other vendors tell you to have. In reality, the top concerns were far more fundamental:

- Data privacy concerns: 45%

- False negatives (missed threats): 40%

- Data governance: 37%

- Black-box AI: 32%

Looking at these four top concerns together paints a pretty clear picture. Security leaders aren’t questioning whether or not AI works; they’re asking:

- What data is AI accessing?

- What is AI doing with that data?

- Why is AI making the decisions it’s making?

When we break down the responses by seniority level, the story remains the same. The top concerns surrounding AI in the SOC were:

- Executives: False negatives

- VPs: Data privacy

- Directors: Black-box AI

- Senior Managers: Loss of control

These responses aren’t contradictory; they’re all expressions of the same need: visibility and control at every level.

What Would Build Confidence in AI in the SOC?

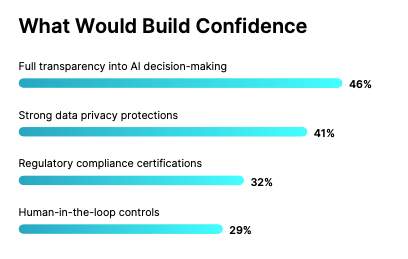

We asked what was reducing trust in AI, so it only made sense to ask what would build confidence too, and the answers were just as telling.

Security teams aren’t looking for less AI; they are looking for more visibility into the AI they already have. They want to understand the planning and reasoning that goes into agentic execution. They want to be able to report to their executives that the AI solutions they’ve invested in are protecting their data and meeting their organization’s unique regulatory and compliance requirements.

And most importantly, they want to maintain the flexibility of human-in-the-loop control. Not human intervention at every step, but the ability to control and customize where and when human analysts should step in, either as overseer or final decision maker. High-severity incident with a critical system on the line? Humans make the call. Low-severity, high-confidence attack pattern? AI handles end-to-end.
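To make that kind of configurable hand-off concrete, here is a minimal sketch of a routing policy. It is purely illustrative and is not Torq's implementation or API: the `Alert` fields, the `route` function, the returned labels, and the 0.9 confidence threshold are all assumptions made for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class Alert:
    # All fields are hypothetical; a real platform would carry far more context.
    severity: Severity
    ai_confidence: float   # 0.0 to 1.0, confidence attached to the AI verdict
    critical_asset: bool   # True when a business-critical system is involved


def route(alert: Alert, min_confidence: float = 0.9) -> str:
    """Decide who makes the final call for an alert."""
    # High severity, or a critical system on the line: humans make the call.
    if alert.severity is Severity.HIGH or alert.critical_asset:
        return "human_decides"
    # Low severity and high confidence: AI handles it end to end.
    if alert.severity is Severity.LOW and alert.ai_confidence >= min_confidence:
        return "ai_autonomous"
    # Everything in between: AI acts, with a human as overseer.
    return "ai_with_human_review"
```

The point of the sketch is that the threshold and the escalation rules live in configuration the SOC team owns, so autonomy can be widened or narrowed without changing the AI itself.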

Rearchitecting AI for Security and Trust

90% of security leaders say that explainable AI decisions are critical to a true AI SOC platform. The current gap between confidence and deployment exists because too many AI SOC solutions can’t provide the type of transparency that builds trust. As a result, SOC teams are spending their time double-checking AI decisions, doubling the work, and not realizing the time savings that AI in the SOC was intended for.

A true AI SOC platform needs to inherently answer the simple questions that SOC teams are asking — what tools is the AI accessing, what data is the AI looking at, and why did the AI reach the conclusion it did? Until those questions have clear, verifiable answers built into the platform architecture, the ceiling on AI expansion in the SOC isn’t the technology. It’s trust.

What Transparent AI Looks Like

The Torq AI SOC Platform was built with these concerns in mind. We understand the importance of transparency in building trust in human-AI collaboration. Here’s how the Torq AI SOC Platform addresses each one directly.

Declarative instruction: Torq HyperAgents™ work under your explicit direction. You give each agent a role, an objective, behavioral guidelines, and specific instructions. You define the tools that they can use (as broadly as a workflow or as granularly as a single step), the data they can access, and the decisions they are authorized to make. Control is built in from the start, not bolted on as an afterthought.
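As a rough illustration of what a declarative scope can look like in code, the sketch below models an agent definition as data plus an allow-list check. It is not the HyperAgents configuration format: `AgentSpec`, its field names, and the example tool and data-source names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    # Hypothetical schema: role, objective, guidelines, and explicit allow-lists.
    role: str
    objective: str
    guidelines: tuple = ()
    allowed_tools: frozenset = frozenset()
    allowed_data: frozenset = frozenset()

    def authorize(self, tool: str, data_source: str) -> bool:
        """Permit a call only if both the tool and the data source are listed."""
        return tool in self.allowed_tools and data_source in self.allowed_data


triage_agent = AgentSpec(
    role="triage analyst",
    objective="classify inbound phishing alerts",
    guidelines=("never delete mail", "escalate executive mailboxes"),
    allowed_tools=frozenset({"lookup_sender_reputation"}),
    allowed_data=frozenset({"email_headers"}),
)
```

Because the spec is frozen and default-deny, anything not explicitly listed is refused: `triage_agent.authorize("quarantine_mailbox", "email_headers")` evaluates to `False`.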

AI reasoning and output visibility: Every agentic action is documented in a transparent timeline view that maps the reasoning leading to each execution. Analysts aren’t left guessing why a verdict was reached, or what evidence supports a specific conclusion. The planning, reasoning, and execution are reviewable and structured for human validation — in real time — with manual override always available.

Immutable audit logs: Every AI decision, action, and reasoning chain is recorded and uneditable. Not just for compliance purposes, but because auditability is what builds trust in AI across the organization. When a CISO asks “What did the AI do, and why?”, the answer is already written, traceable, and defendable.
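One common way to make a log tamper-evident is to chain each record to the hash of the one before it. The sketch below shows that general technique using Python's standard `hashlib` and `json` modules. It is an assumption-laden illustration, not a description of how Torq stores audit data, and the `AuditLog` class and its methods are hypothetical. Note that hash chaining detects edits after the fact; it does not by itself prevent them.

```python
import hashlib
import json


def _digest(prev_hash: str, entry: dict) -> str:
    # Hash the previous record's hash together with this entry's canonical JSON.
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


class AuditLog:
    GENESIS = "0" * 64  # placeholder hash that precedes the first record

    def __init__(self) -> None:
        self._records = []  # list of (entry, hash) pairs

    def append(self, action: str, reasoning: str) -> str:
        """Record an AI action and its reasoning; return the record's hash."""
        prev = self._records[-1][1] if self._records else self.GENESIS
        entry = {"action": action, "reasoning": reasoning}
        record_hash = _digest(prev, entry)
        self._records.append((entry, record_hash))
        return record_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered record breaks it."""
        prev = self.GENESIS
        for entry, record_hash in self._records:
            if _digest(prev, entry) != record_hash:
                return False
            prev = record_hash
        return True
```

After a few `append` calls, `verify()` returns `True`; if any stored entry is later altered, the recomputed hashes no longer match and `verify()` returns `False`.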

Human-AI collaboration: Torq Socrates coordinates the full platform, with humans on the loop by design. Response actions can execute completely autonomously for high-volume, high-confidence scenarios or with human-in-the-loop confirmation when severity or business context demands it. Analysts set the boundaries and build in off-ramps for human intervention, while Socrates documents and learns over time. As confidence in AI grows, SOC teams can grant greater autonomy across day-to-day use cases. Trust is earned, after all.

The Confidence SOC Teams Need

The #1 confidence booster in AI isn’t more features or better algorithms; it’s transparency. Show how AI reached its decisions, and teams will trust it more. Give them the ability to dial autonomy based on context, and they’ll grant more of it. AI security and trust come down to architecture, not marketing. A true AI SOC platform is built for trust from the inside out.

For more on how the Torq AI SOC Platform is the only enterprise-ready AI SOC that security leaders can actually trust, check out the complete blog series below.