TL;DR

- IT automation isn’t about replacing your team — it’s about stopping them from spending their best hours on work that never required human judgment in the first place. Provisioning, access requests, onboarding checklists: these should run themselves.

- The difference between basic task automation and true IT workflow automation is platform depth. Connecting dozens of systems, enforcing security guardrails, and handling real-world complexity — conditional logic, exception handling, human-in-the-loop approvals — requires more than a point solution.

- Torq gives IT operations teams the same enterprise-grade automation infrastructure that powers the world’s most sophisticated security teams, with the integrations, AI-driven decision-making, and governance controls to match.

IT teams aren’t overwhelmed because the work is hard. They’re overwhelmed because the work is endless. Provisioning requests. Access queues. Onboarding checklists duct-taped across a dozen disconnected systems. None of it requires a skilled engineer — it just requires one to be available. And available, at enterprise scale, means buried. That’s not an IT problem. That’s an automation problem.

IT automation changes that equation. When done right, it doesn’t just speed up existing processes — it fundamentally transforms how IT operations run, what your team focuses on, and how securely and efficiently your organization scales.

This is what modern IT process automation looks like, why it matters, and how solutions like Hyperautomation are enabling enterprises to get there faster.

What Are IT Automation Tools?

IT automation tools are software platforms that execute IT processes and workflows with minimal or no human intervention. Instead of a technician manually stepping through a ticket, an automated workflow handles the trigger, the logic, the cross-system actions, and the outcome — consistently, at scale, and at machine speed.
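
The trigger, logic, actions, and outcome pattern described above can be sketched in a few lines of Python. This is a minimal illustration, not Torq’s API or any product’s API: the `Workflow` class, the event shape, and the stub actions `create_account` and `notify_manager` are hypothetical, and the stubs only describe what a real integration call would do.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical event shape; a real trigger might be a webhook from an HR or ticketing system.
Event = dict

@dataclass
class Workflow:
    """A minimal trigger -> logic -> actions -> outcome pipeline (illustrative only)."""
    trigger: str                                # event type that starts the workflow
    condition: Callable[[Event], bool]          # logic: should this event be acted on?
    actions: list[Callable[[Event], str]] = field(default_factory=list)

    def run(self, event: Event) -> dict:
        if event.get("type") != self.trigger or not self.condition(event):
            return {"status": "skipped", "results": []}
        # Cross-system actions run in order; each stub reports what it did.
        results = [action(event) for action in self.actions]
        return {"status": "completed", "results": results}

# Stub actions standing in for calls to identity and messaging systems (hypothetical).
def create_account(event: Event) -> str:
    return f"account created for {event['user']}"

def notify_manager(event: Event) -> str:
    return f"manager notified about {event['user']}"

access_request = Workflow(
    trigger="access_request",
    condition=lambda e: e.get("app") in {"jira", "github"},  # only pre-approved apps
    actions=[create_account, notify_manager],
)

print(access_request.run({"type": "access_request", "user": "dana", "app": "jira"}))
```

Requests for apps outside the pre-approved set come back as `skipped`, which is where a fuller workflow would branch to an approval step instead.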

This spans a wide range of IT processes: access provisioning, employee lifecycle management, service desk requests, compliance documentation, software deployment, system configuration, and more. The common thread is that these are high-volume, rule-based processes where manual execution creates bottlenecks, inconsistencies, and risk.

IT automation can be narrow (automating a single repetitive task) or expansive (orchestrating complex, cross-functional workflows across your entire technology stack). The difference between those two ends of the spectrum is the platform you build on.

What IT Automation Tools Are Not

IT automation tools aren’t meant to replace IT professionals. They’re meant to redirect their effort. When your team isn’t spending half their day provisioning accounts, chasing approval chains, or resetting passwords, they have the bandwidth to tackle the work that actually requires their expertise.

IT automation is also not a “set it and forget it” proposition, at least not at the enterprise level. Effective IT workflow automation requires thoughtful design, strong governance, and a platform that can handle real-world complexity: conditional logic, exception handling, human-in-the-loop checkpoints, and cross-system integrations that actually hold up in production.

What Are the Benefits of IT Automation Tools?

Efficiency and Time Savings

The most immediate impact of IT automation tools is time — specifically, time reclaimed from repetitive, low-value tasks. Consider what a typical IT team handles on any given day: access requests, onboarding and offboarding workflows, software installations, password resets, compliance checks. These tasks are necessary. They are not, however, a good use of skilled engineers.

Automated IT software executes these workflows in a fraction of the time, without the delays introduced by manual handoffs, approval queues, or business-hour dependencies. Access provisioning that once took three to five days can be completed in minutes. Help desk tickets that once piled up in queues get resolved through self-service automation, or are never generated in the first place.

Improved Security Posture

Manual processes are inherently inconsistent. When a human executes a workflow, there’s variance: steps get skipped, exceptions get made informally, and documentation lags. Automation enforces consistency. Every workflow runs the same way, every time, with a full audit trail.
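
One way to get that audit trail is to route every automated step through a single wrapper that records who triggered it, what ran, and how it ended, including failures. The sketch below assumes an in-memory list as the log store and uses a hypothetical `disable_account` step; a production system would write to durable, tamper-resistant storage instead.

```python
import functools
from datetime import datetime, timezone

AUDIT_LOG: list[dict] = []  # in-memory stand-in for a durable audit store

def audited(step):
    """Record every invocation of a workflow step, whether it succeeds or fails."""
    @functools.wraps(step)
    def wrapper(actor: str, *args, **kwargs):
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "step": step.__name__,
            "args": args,
        }
        try:
            result = step(*args, **kwargs)
            entry["outcome"] = "success"
            return result
        except Exception as exc:
            entry["outcome"] = f"error: {exc}"
            raise
        finally:
            AUDIT_LOG.append(entry)  # written on success and on failure
    return wrapper

@audited
def disable_account(user: str) -> str:
    # Hypothetical step; a real one would call the identity provider's API.
    if not user:
        raise ValueError("missing user")
    return f"{user} disabled"

disable_account("offboarding-workflow", "dana")
```

Because the wrapper, not each individual step, writes the log entry, no step can skip its own documentation.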

This matters especially for access management. Departing employees who retain system access after their last day represent a real, well-documented security risk. Automated offboarding eliminates that window entirely. Just-in-time (JIT) access workflows ensure that elevated permissions are granted only when needed and revoked automatically when the need expires — reducing your standing attack surface without creating operational friction.

Scalability and Integration

IT workload doesn’t scale linearly with headcount; it outpaces it. As organizations grow, they add employees, systems, and complexity at the same time, and the volume of IT work grows faster than any team can absorb manually. Automation is the only way to scale IT operations without increasing costs in proportion.

The right IT automation platform doesn’t operate in isolation. It connects across your full technology stack: HR systems, identity providers, cloud platforms, SaaS applications, communication tools, and ticketing systems. That integration depth is what separates a narrow automation tool from a true IT automation solution — and it’s what enables the kind of cross-functional, multi-step workflows that drive real operational transformation.

How Do You Build a Roadmap for IT Automation?

Enterprises rarely achieve full IT automation in a single initiative. The organizations that get there do so in stages, building confidence, expanding scope, and deepening integration as they go. A typical progression has three phases.

Phase 1: Quick Wins

Start with high-volume, low-complexity processes where the ROI is immediate and the risk of getting it wrong is low. Password resets. Software access requests. Basic onboarding task lists. These are workflows your team executes dozens of times per week, where automation delivers instant time savings and a clear proof of value.

This phase is also about building the organizational muscle for automation: getting stakeholders aligned, establishing governance practices, and proving the concept internally before expanding scope.

Phase 2: Intermediate Automation

Once your team has initial wins under their belt, move into more complex, multi-step workflows that span multiple systems. Employee onboarding and offboarding is a prime example — it touches HR platforms, identity providers, communication tools, cloud applications, and more. Automating it end-to-end requires integration depth and workflow logic, but the payoff is significant: faster time-to-productivity for new hires, fewer access errors, and dramatically reduced IT overhead.

This phase also introduces more sophisticated patterns: conditional branching, approval routing, exception handling, and human-in-the-loop checkpoints for decisions that still warrant human judgment.
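
A minimal sketch of these patterns for a software access request follows. The risk tiers, the retry limit, and the `approve` callback (standing in for routing the request to a human approver) are assumptions made for illustration, not any platform’s built-in behavior.

```python
from typing import Callable

class TransientError(Exception):
    """Raised by an integration call that may succeed on retry (e.g. a timeout)."""

def grant_with_retry(grant: Callable[[], str], attempts: int = 3) -> str:
    # Exception handling: retry transient failures, then escalate to a human.
    for _ in range(attempts):
        try:
            return grant()
        except TransientError:
            continue
    return "escalated: grant failed after retries"

def handle_request(app: str, risk: str, approve: Callable[[str], bool],
                   grant: Callable[[], str]) -> str:
    # Conditional branching on the request's risk tier.
    if risk == "low":
        return grant_with_retry(grant)
    if risk == "high":
        # Human-in-the-loop: an approver decides before anything is provisioned.
        if not approve(app):
            return "denied by approver"
        return grant_with_retry(grant)
    return "rejected: unknown risk tier"

# Usage: a grant call that times out once and then succeeds.
calls = {"n": 0}
def flaky_grant() -> str:
    calls["n"] += 1
    if calls["n"] < 2:
        raise TransientError("timeout")
    return "granted"

print(handle_request("github", "high", approve=lambda app: True, grant=flaky_grant))  # granted
```

Low-risk requests never wait on a person, while high-risk ones always do; a failed integration ends in an escalation rather than a silent stall.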

Phase 3: Full Orchestration

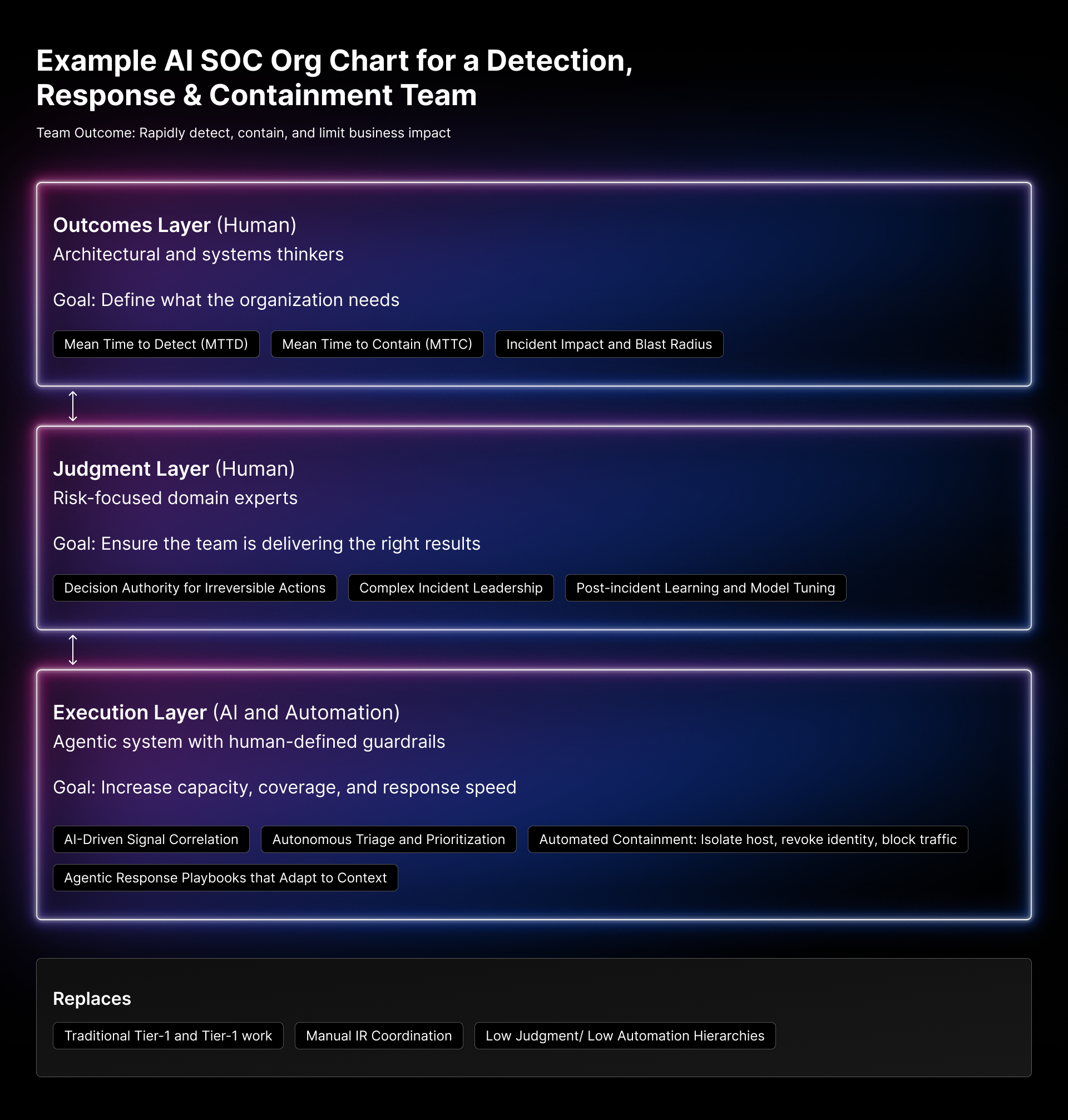

At the enterprise level, IT automation becomes Hyperautomation — the orchestration of complex, cross-functional workflows across security, IT, DevOps, and HR. This isn’t just automating what humans do today. It’s enabling systems to analyze context, make risk-based decisions, and act autonomously on complex data — so humans can intervene precisely when and where they add the most value.

This phase requires a platform built for enterprise-scale complexity: deep integration capabilities, strong security guardrails, agentic AI that can reason through multi-step decisions, and governance controls that keep automated processes auditable and compliant.

Common IT Automation Use Cases

Employee Onboarding and Offboarding

Manual identity lifecycle management is one of the most consequential inefficiencies in enterprise IT. Fragmented systems, manual coordination, and inconsistent processes — these create security vulnerabilities, compliance gaps, and a bad experience for the employees on both ends of the workflow.

Automated onboarding and offboarding orchestrates the full identity lifecycle: provisioning accounts across every relevant system, enforcing role-based access policies, generating compliance documentation, and — critically — executing offboarding the moment an employee departs, with no delay and no manual steps that could be missed.
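
At the core of role-based lifecycle automation is a mapping from role to entitlements: onboarding provisions everything the role requires, and offboarding revokes everything the user actually holds, including access granted ad hoc along the way. The role names, system names, and in-memory `directory` below are hypothetical; in practice this state lives in an identity provider or governance tool.

```python
# Hypothetical role-to-entitlement policy.
ROLE_POLICY = {
    "engineer": {"email", "slack", "github", "aws-dev"},
    "sales": {"email", "slack", "crm"},
}

# In-memory stand-in for the accounts each user holds across systems.
directory: dict[str, set[str]] = {}

def onboard(user: str, role: str) -> set[str]:
    """Provision every system the role requires; an unknown role gets nothing."""
    granted = set(ROLE_POLICY.get(role, set()))
    directory.setdefault(user, set()).update(granted)
    return granted

def offboard(user: str) -> set[str]:
    """Revoke everything the user holds, not just what the role policy lists."""
    return directory.pop(user, set())

onboard("dana", "engineer")
directory["dana"].add("prod-db")   # ad hoc access granted after onboarding
print(sorted(offboard("dana")))    # ['aws-dev', 'email', 'github', 'prod-db', 'slack']
```

Revoking from the record of what a user holds, rather than recomputing from the role, is what keeps ad hoc grants from surviving a departure.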

Just-in-Time Access

Standing privileges are a persistent security liability. Users accumulate elevated permissions over time — permissions that remain active long after the operational need expires. JIT access automation flips this model: permissions are granted on demand, scoped to what’s actually needed, and automatically revoked when the window closes.
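
A minimal sketch of time-bound grants: each grant is scoped to a single permission and carries an expiry, and a scheduled sweep revokes anything past it. The `JITGrants` class, the one-hour default window, and passing `now` explicitly (to keep the example deterministic) are assumptions; a real workflow would also call the target system to remove the permission.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class Grant:
    user: str
    permission: str      # scoped to one permission, not a whole role
    expires_at: datetime

class JITGrants:
    def __init__(self) -> None:
        self.active: list[Grant] = []

    def request(self, user: str, permission: str, now: datetime,
                duration: timedelta = timedelta(hours=1)) -> Grant:
        grant = Grant(user, permission, now + duration)
        self.active.append(grant)
        return grant

    def has_access(self, user: str, permission: str, now: datetime) -> bool:
        return any(g.user == user and g.permission == permission and g.expires_at > now
                   for g in self.active)

    def revoke_expired(self, now: datetime) -> list[Grant]:
        """Remove grants whose window has closed; intended to run on a schedule."""
        expired = [g for g in self.active if g.expires_at <= now]
        self.active = [g for g in self.active if g.expires_at > now]
        return expired

t0 = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
jit = JITGrants()
jit.request("dana", "prod-db:read", now=t0)
print(jit.has_access("dana", "prod-db:read", now=t0 + timedelta(minutes=30)))  # True
print(len(jit.revoke_expired(now=t0 + timedelta(hours=2))))                    # 1
```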

This reduces your attack surface without slowing down operations. Employees get access when they need it, through familiar self-service channels, without waiting for a manual approval chain.

Self-Service Employee Chatbots

Most IT help desk tickets are routine. Access requests, software installations, password resets, and account unlocks — these don’t require a skilled engineer. They require a reliable process. Self-service employee chatbots and automation deliver that process through channels employees already use: Slack, Microsoft Teams, and web forms.
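
Behind a self-service chatbot, the essential piece is a dispatcher that maps a recognized request to an automated handler and falls back to a human ticket for anything it can’t handle. The keyword matching and the handlers `reset_password` and `unlock_account` are deliberately simple, hypothetical stand-ins; a real bot would receive messages through the Slack or Teams APIs and use a more robust intent classifier.

```python
from typing import Callable

def reset_password(user: str) -> str:
    return f"Password reset link sent to {user}."    # stub for an identity provider call

def unlock_account(user: str) -> str:
    return f"Account for {user} unlocked."           # stub for an identity provider call

# Hypothetical keyword-to-handler routing table, checked in insertion order.
HANDLERS: dict[str, Callable[[str], str]] = {
    "password": reset_password,
    "unlock": unlock_account,
}

def handle_message(user: str, text: str) -> str:
    lowered = text.lower()
    for keyword, handler in HANDLERS.items():
        if keyword in lowered:
            return handler(user)
    # Anything unrecognized still reaches a person, as a ticket.
    return f"Opened a ticket for the IT team: {text!r}"

print(handle_message("dana", "I forgot my password"))  # Password reset link sent to dana.
```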

The result is dramatically lower ticket volume for IT teams and a better experience for employees, who get their requests resolved in minutes instead of days.

How Do You Choose the Right IT Automation Tools?

Not all IT automation platforms are built the same. Evaluating them requires clarity about what you actually need — today, and as your operations scale.

Evaluating Maturity and Needs

Start with an honest assessment of your team’s current state. What processes are consuming the most time? Where are the most common points of failure or inconsistency? What does your integration landscape look like, and how complex are the workflows you want to automate?

Teams early in their automation journey often benefit from starting with a platform that offers both low-code accessibility and the depth to grow with them — so they’re not rearchitecting their automation stack eighteen months in. The right IT automation solution meets you where you are and scales to where you need to go.

Governance and Security Considerations

Automation amplifies whatever governance practices you have in place. If access controls and credential management are weak, automating workflows on top of that foundation makes the problem worse.

The platform you choose needs to treat security as a foundation, not a feature. That means strong role-based access controls for the automation platform itself, encrypted credential management, comprehensive audit logging, and human-in-the-loop checkpoints for high-stakes actions. An automated workflow that grants privileged access to sensitive systems is only as trustworthy as the platform it runs on.
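
Role-based access control for the automation platform itself comes down to deciding who may view, run, or edit each workflow, with an extra checkpoint before high-stakes runs. A minimal deny-by-default sketch follows; the role names, operations, and the `HIGH_STAKES` set are hypothetical.

```python
# Hypothetical platform roles and the operations each may perform.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "viewer": {"view"},
    "operator": {"view", "run"},
    "builder": {"view", "run", "edit"},
}

# Workflows that touch privileged access need a human approval before running.
HIGH_STAKES = {"grant-admin-access"}

def authorize(role: str, operation: str, workflow: str, approved: bool = False) -> bool:
    if operation not in ROLE_PERMISSIONS.get(role, set()):
        return False                  # unknown role or disallowed operation: deny
    if operation == "run" and workflow in HIGH_STAKES and not approved:
        return False                  # high-stakes run waits for a human checkpoint
    return True
```

Denying by default means a misconfigured or missing role fails closed rather than open.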

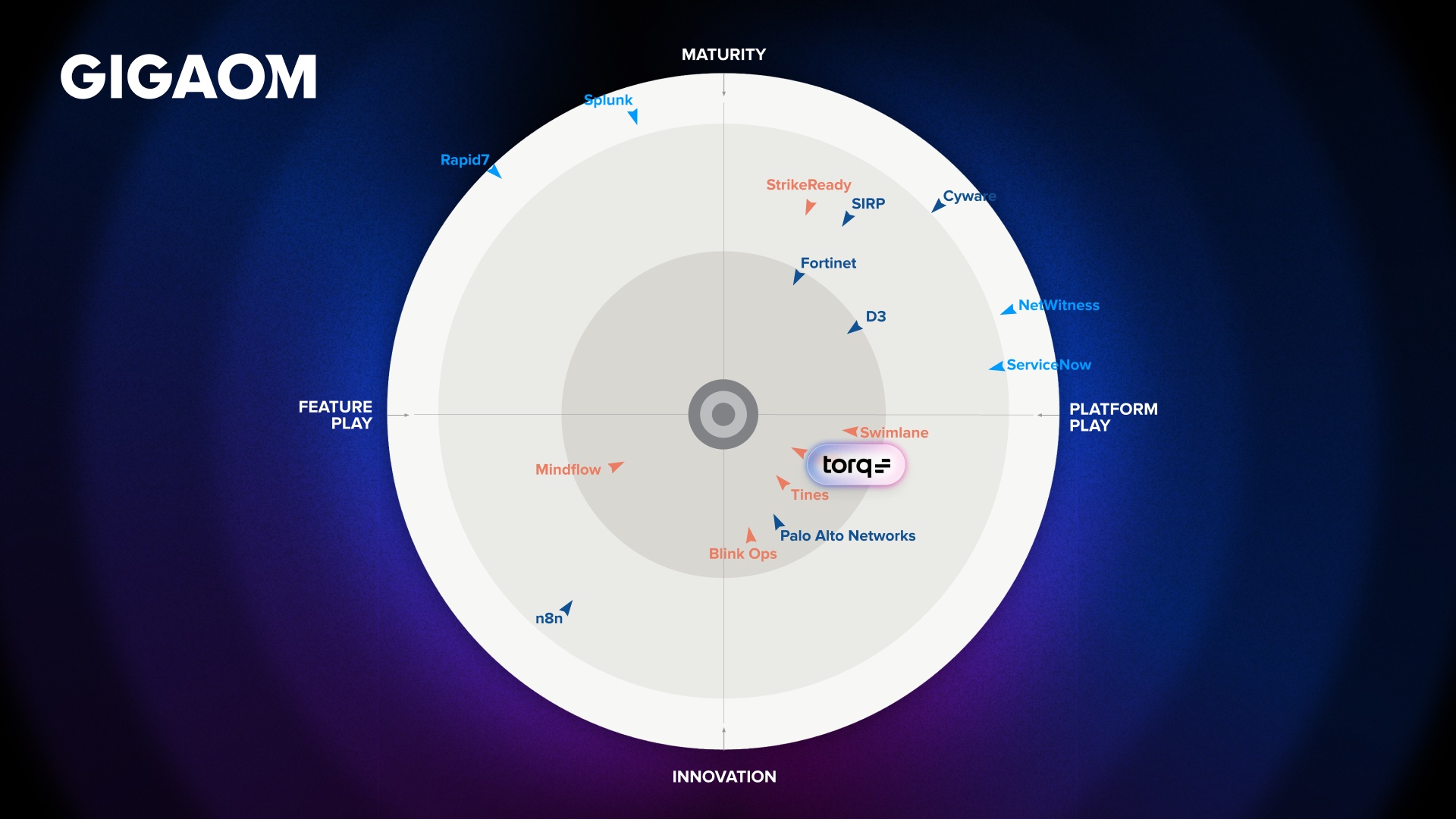

Why Torq Is the IT Automation Platform Enterprises Choose

The Torq AI SOC platform, powered by Hyperautomation™, supports enterprises that need IT automation to operate at the same level of rigor, scale, and security as their most critical business systems.

The platform connects SecOps, IT, DevOps, and HR through 300+ integrations and 4,000+ out-of-the-box actions — eliminating the visibility gaps and manual handoffs that come from siloed operations. It supports the full range of IT automation patterns: simple task automation, complex multi-step workflows, AI-driven decision-making, and human-in-the-loop approvals. And it does all of this without compromising on the security guardrails that enterprise operations demand.

For IT teams, this means automated employee onboarding and offboarding that reduces identity management costs by 60% and cuts access errors by 99%. It means just-in-time access workflows that eliminate standing privileges and provision access 70% faster. And it means self-service chatbots that reduce help desk ticket volume by up to 70% while giving employees a better experience.

IT automation isn’t a future capability. It’s a present-day competitive advantage — and the gap between organizations that have it and those that don’t is widening fast.

See how Agoda automated phishing response, password resets, and cloud security workflows with Torq.

FAQs

What are IT automation tools?

IT automation tools are software platforms that execute IT processes and workflows with minimal or no human intervention. This includes access provisioning, employee onboarding and offboarding, service desk requests, and compliance documentation: high-volume, rule-based processes where manual execution creates bottlenecks, inconsistencies, and security risk.

What is an example of IT automation?

A common example is automated employee onboarding. When a new hire is added to an HR system, an automated workflow provisions their accounts across every relevant platform (email, Slack, cloud applications, identity providers), assigns role-based access, and generates compliance documentation, all without a single manual step from IT.

Why do IT teams need automation tools?

IT teams are consistently asked to do more with the same or fewer resources. IT automation tools are the only way to scale operations without increasing headcount in proportion. Beyond efficiency, they improve security by enforcing consistent processes, reducing human error, and freeing skilled engineers to focus on work that actually requires their expertise.

Which IT processes should you automate first?

The best candidates are high-volume, repetitive, rule-based processes: ones that follow a predictable path and don’t require nuanced human judgment on every instance. Employee onboarding and offboarding, access provisioning, just-in-time access requests, password resets, and help desk ticket routing are all strong starting points.

How do IT automation tools improve security?

IT automation tools enforce consistent execution of security-sensitive workflows, eliminating the variability that comes with manual processes. Automated offboarding ensures departing employees lose access immediately with no gaps. Just-in-time access provisioning eliminates standing privileges. Comprehensive audit logging provides the documentation that compliance and security teams require.